Fraud, IDV and AML: One Problem, Three Languages and AI Misalignment

More organizations are waking up to a costly truth: they are fighting the same underlying risk while speaking three entirely different languages. What once felt like a manageable internal disconnect is now translating directly into financial loss, operational inefficiency, and regulatory exposure.

Globally, banks and fintechs spend an estimated $206 billion annually on financial crime compliance. And that figure doesn’t even account for adjacent regulated sectors like iGaming. Despite this massive investment, fraud losses continue to rise, and compliance penalties show no signs of slowing down. The conclusion is hard to ignore: more effort is not producing better outcomes because it is not producing shared understanding.

Nowhere is this more visible than in identity-related crime. In the United States alone, over 1.1 million identity fraud reports were filed last year. Identity is no longer just a checkpoint in the customer journey — it has become the central battlefield.

Inside most organizations, however, the response remains fragmented. Fraud teams are refining behavioral models and anomaly detection systems. Compliance teams are expanding transaction monitoring and account reviews. Identity verification (IDV) teams are deploying new document checks, biometrics, and liveness detection as part of broader know-your-customer (KYC) programs. All three are increasingly powered by AI.

But as AI-driven attacks evolve — from synthetic identities to face-swap-based impersonation — the gap between these teams is no longer just a cultural inconvenience. It has become a structural vulnerability.

One Risk Surface, Three Mental Models

On the surface, fraud, anti-money laundering (AML), and IDV teams are all analyzing the same entity: the customer. They observe the same critical touchpoints: onboarding, login, transactions, and withdrawals. Yet they interpret and act on these moments through fundamentally different lenses.

- Fraud teams optimize for loss prevention and abuse reduction

- AML teams focus on regulatory exposure, sanctions, and money laundering risk

- IDV teams prioritize speed, conversion, and verification success rates

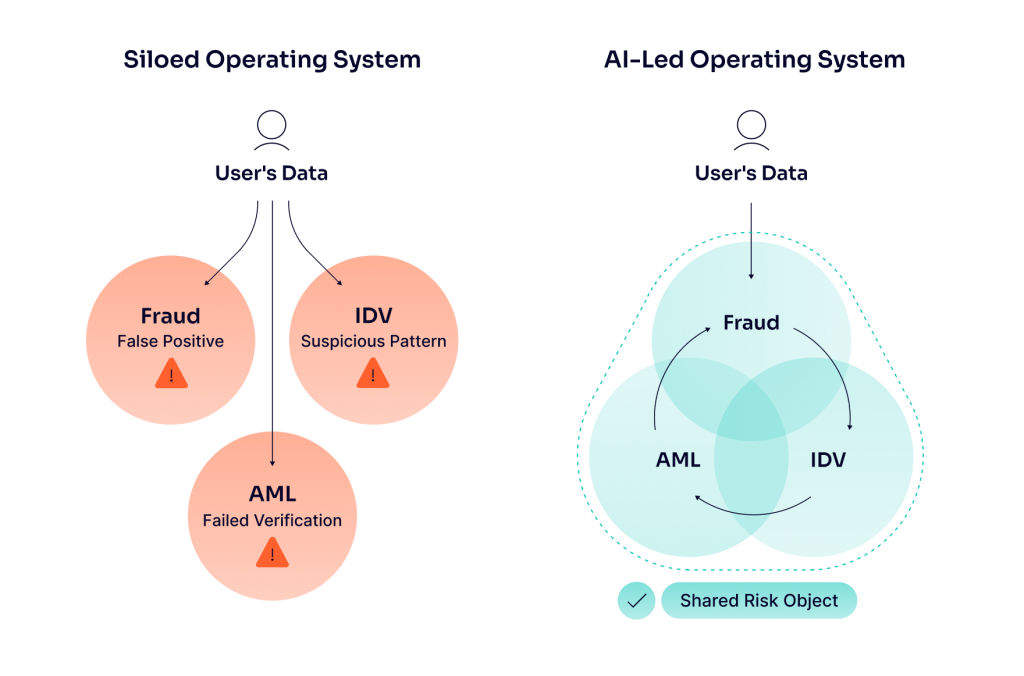

Each function builds its own systems, metrics, and escalation logic around its mandate. The result is predictable but dangerous: the same risky event can be classified in three different ways.

A single session might be:

- A false positive for fraud

- A failed verification for IDV

- A suspicious pattern for AML

All three interpretations can exist simultaneously — each technically correct within its own framework, yet collectively misaligned.

This divergence once made sense. Systems were separate, data was siloed, and regulatory expectations were segmented. But that world no longer exists.

Today, the entire financial crime stack is converging on a shared, identity-driven risk surface: the set of moments where you decide:

- Who the user is

- How much you trust them

- What they are allowed to do next

Yet despite this convergence, the language, incentives, and operating models of these teams have not kept pace. That is why a suspicious onboarding, a high-risk login, and a questionable transaction often trigger three independent investigations instead of one unified assessment of the same risk.

Where AI Amplifies the Problem — or Solves It

AI is no longer experimental in financial crime programs — it is foundational.

- Fraud teams deploy AI across device intelligence, behavioral biometrics, and transaction streams

- IDV teams use AI for document verification, facial recognition, and liveness detection

- AML teams rely on machine learning for transaction monitoring, name screening, and case triage

Individually, these systems are powerful. Collectively, they are often disconnected.

Each team trains models on its own datasets, defines its own labels, and builds its own understanding of what “suspicious” means. A failed selfie, an unusual payment route, a sanctions hit, or a risky login can all be logged differently — even when they occur within the same session, for the same user, at the same moment.

The consequences are significant:

- Duplicate investigations

- Conflicting decisions

- Blind spots across the customer lifecycle

- Increased operational costs

- Slower customer experiences

- Diffused accountability

AI, in this setup, doesn’t eliminate fragmentation — it hardcodes it.

The real advantage of AI only emerges when risk is treated as a shared surface, not a collection of isolated problems.

This requires a shift from siloed AI tools to an AI-led operating system — one where all signals are understood in context, across functions.

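Open standards such as the Model Context Protocol (MCP), which defines a common way for AI applications to connect to external data sources and tools, become important here. They help make data, regardless of where it originates, available in a structured, interpretable form that can be reused across systems.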

In a unified environment:

- Device intelligence can inform both fraud and AML decisions

- Behavioral patterns from onboarding can influence transaction risk scoring

- IDV outcomes can shape trust levels across the entire lifecycle

Instead of competing models, you get complementary intelligence.

Standardized definitions and shared event taxonomies ensure that a “high-risk login” or “onboarding anomaly” carries the same meaning — whether viewed from a fraud dashboard, an AML system, or an IDV report.
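To make the idea of a shared event taxonomy concrete, here is a minimal sketch, assuming each team keeps its own alert labels but maps them onto a small set of canonical events. Every name below (`SharedEvent`, `NATIVE_TO_SHARED`, `to_shared_event`, and the team-native labels) is hypothetical and chosen only for illustration; it is not any particular vendor's schema.

```python
from enum import Enum


class SharedEvent(Enum):
    """Hypothetical canonical event types in a shared taxonomy."""
    HIGH_RISK_LOGIN = "high_risk_login"
    ONBOARDING_ANOMALY = "onboarding_anomaly"
    SUSPICIOUS_PAYMENT = "suspicious_payment"


# Hypothetical team-native labels, each mapped onto exactly one canonical event.
# Teams keep their own vocabulary; the mapping is what gives it a shared meaning.
NATIVE_TO_SHARED = {
    ("fraud", "ato_suspected_login"): SharedEvent.HIGH_RISK_LOGIN,
    ("aml", "login_from_high_risk_jurisdiction"): SharedEvent.HIGH_RISK_LOGIN,
    ("idv", "liveness_failed_at_signup"): SharedEvent.ONBOARDING_ANOMALY,
    ("fraud", "velocity_rule_signup"): SharedEvent.ONBOARDING_ANOMALY,
    ("aml", "structuring_pattern"): SharedEvent.SUSPICIOUS_PAYMENT,
}


def to_shared_event(team: str, native_label: str) -> SharedEvent:
    """Translate a team's own alert label into the shared taxonomy."""
    try:
        return NATIVE_TO_SHARED[(team, native_label)]
    except KeyError:
        # Refuse to guess: an unmapped label is a gap in the taxonomy to fix.
        raise ValueError(f"unmapped label {native_label!r} from team {team!r}") from None
```

With this mapping, a fraud alert for suspected account takeover and an AML alert for a login from a high-risk jurisdiction both resolve to `SharedEvent.HIGH_RISK_LOGIN`, so every dashboard reports the same event. Raising on unmapped labels, rather than defaulting silently, is one way to keep the taxonomy complete as teams add new rules.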

Building a Shared Risk Language and Operating Model

Alignment does not require restructuring the organization. It requires standardizing how risk is defined, measured, and acted upon.

Leading institutions are moving toward a common financial crime taxonomy — a unified framework of:

- Risk categories

- Event types

- Severity levels

This taxonomy sits above individual functions, allowing fraud, AML, and IDV teams to map their controls and alerts onto a shared backbone.

In practice, this means:

- Defining once what constitutes high-risk onboarding, login, or payment behavior

- Allowing teams to apply their own thresholds without redefining the core event

- Aligning on escalation triggers versus monitoring signals across all systems
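The three practices above can be sketched in a few lines, assuming teams produce a 0-to-1 model score and the taxonomy defines shared severity levels. The event is defined once; each team supplies its own thresholds; and the escalation-versus-monitoring decision is part of the shared definition. `Severity`, `EventDefinition`, `TEAM_THRESHOLDS`, `classify`, and the numeric cutoffs are all hypothetical illustrations, not recommended values.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical shared severity levels, ordered so they can be compared."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class EventDefinition:
    """One shared definition of a risk event, owned by the taxonomy, not by a team."""
    name: str
    # At or above this severity the event is an escalation trigger;
    # below it, the event is only a monitoring signal.
    escalate_at: Severity


# Hypothetical per-team cutoffs mapping model scores onto shared severity levels.
# Teams may tune these without redefining the event itself.
TEAM_THRESHOLDS = {
    "fraud": {Severity.MEDIUM: 0.6, Severity.HIGH: 0.85},
    "aml": {Severity.MEDIUM: 0.5, Severity.HIGH: 0.75},
}


def classify(event: EventDefinition, team: str, score: float) -> tuple[Severity, str]:
    """Map a team's score to a shared severity and a shared action."""
    severity = Severity.LOW
    # Walk the cutoffs from lowest to highest level; keep the highest one reached.
    for level, cutoff in sorted(TEAM_THRESHOLDS[team].items()):
        if score >= cutoff:
            severity = level
    action = "escalate" if severity >= event.escalate_at else "monitor"
    return severity, action


HIGH_RISK_LOGIN = EventDefinition(name="high_risk_login", escalate_at=Severity.HIGH)
```

Under these assumed cutoffs, a score of 0.8 is `HIGH` (escalate) for AML but `MEDIUM` (monitor) for fraud. The thresholds differ, but both teams are still talking about the same `high_risk_login` event and the same escalation rule.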

From here, organizations can build around a shared risk object — a unified profile that combines:

- Onboarding and KYC data

- IDV outcomes

- Device and behavioral signals

- Transactional activity

This creates a single, evolving view of the customer or session.
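A shared risk object can be as simple as one record per customer that every function writes into. The sketch below assumes four signal kinds matching the list above and keeps a single timeline of who contributed what. `SharedRiskProfile`, `add_signal`, and the example signal values are hypothetical; a production profile would also need persistence, access control, and retention rules.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class SharedRiskProfile:
    """Hypothetical unified risk object for one customer."""
    customer_id: str
    kyc: dict = field(default_factory=dict)              # onboarding and KYC data
    idv_outcome: Optional[str] = None                    # e.g. "verified", "failed_liveness"
    device_signals: list = field(default_factory=list)   # device and behavioral signals
    transactions: list = field(default_factory=list)     # transactional activity
    timeline: list = field(default_factory=list)         # (source team, kind, value) in arrival order

    def add_signal(self, source: str, kind: str, value: Any) -> None:
        """Route a signal from any team into the one shared profile."""
        if kind == "kyc":
            self.kyc.update(value)
        elif kind == "idv":
            self.idv_outcome = value
        elif kind == "device":
            self.device_signals.append(value)
        elif kind == "transaction":
            self.transactions.append(value)
        else:
            raise ValueError(f"unknown signal kind: {kind!r}")
        # Every signal also lands on a single timeline, whichever team produced it.
        self.timeline.append((source, kind, value))
```

For example, after `profile = SharedRiskProfile("cust-123")`, an IDV result, a fraud device signal, and an AML-monitored payment all update the same object, so any investigator reading `profile.timeline` sees the full sequence rather than one team's slice of it.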

Unified case management then brings this to life:

- Fraud and AML alerts are investigated in the same workspace

- Duplicate efforts are eliminated

- Blended risk patterns become visible
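The core of unified case management, one case per customer instead of one per team, can be sketched as a grouping step. This assumes alerts already carry a customer identifier and a shared-taxonomy event name; `Alert`, `build_cases`, and `blended_cases` are hypothetical names for illustration, and real case management would add ownership, status, and audit history.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """Hypothetical alert record produced by any team."""
    team: str          # "fraud", "aml", or "idv"
    customer_id: str
    event: str         # shared-taxonomy event name


def build_cases(alerts):
    """Group alerts from every team into one case per customer, not one per team."""
    cases = defaultdict(list)
    for alert in alerts:
        cases[alert.customer_id].append(alert)
    return dict(cases)


def blended_cases(cases):
    """Return customers whose case holds alerts from more than one team."""
    return sorted(
        customer_id
        for customer_id, items in cases.items()
        if len({a.team for a in items}) > 1
    )
```

Given a fraud alert and an AML alert for the same customer plus a fraud alert for a second customer, `build_cases` yields two cases rather than three investigations, and `blended_cases` surfaces the first customer as the one where fraud and AML signals overlap.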

Within this model, IDV becomes the critical bridge. It is the earliest structured interaction with the user and produces high-confidence signals about identity. These signals should not be re-collected or reinterpreted downstream — they should be reused across fraud and AML workflows.

But frameworks and systems alone are not enough.

A shared language only works if it is embedded into the operating rhythm of the organization:

- Joint risk reviews across key journeys (onboarding, login, first payment)

- Cross-functional escalation runbooks

- Regular forums where fraud, AML, and IDV leaders evaluate the same metrics and model outputs

Consistency is not achieved through tools — it is reinforced through behavior.

From Fragmentation to a Unified Risk Narrative

The next generation of financial crime will not defeat organizations because their models are outdated. It will exploit the gaps between those models.

It will succeed where teams cannot agree on what they are seeing.

Regulators, boards, and customers are converging on a single expectation:

- One unified view of risk

- One coherent narrative

- One accountable system — regardless of where the signal originated

This makes language a strategic asset.

If an organization cannot describe a risky onboarding, login, or transaction in consistent terms across fraud, IDV, and AML, it cannot:

- Govern it effectively

- Automate it reliably

- Explain it when something goes wrong

And in a regulatory environment that increasingly demands transparency and accountability, that is not just inefficient — it is unacceptable.

Fragmented risk language is no longer a minor operational flaw.

It is a systemic weakness.

And in today’s landscape, it is one that organizations can no longer afford.